Making Epidemiology Make Sense For Clinicians

I discovered epidemiology through an interest in evidence-based practice and clinical research. Seeing patients brought up research questions, and I wanted to be able to answer those with numbers. What I learned is that our results differed from the few published studies that shaped the informal, “word on the street” guidelines we abided by, not because their research was flawed, but because our patient populations were different. Had we put the two Table 1’s side by side, the demographic differences would have been obvious.

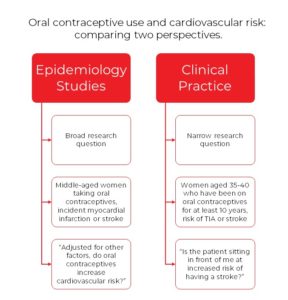

Hannaford and Owen-Smith did a proof-of-concept literature search in 1998 to see how many population studies (epidemiologic studies) provided relevant data to answer their specific clinical question. There are a few points of comparison between epidemiology and clinical practice here:

So, this is where adjustment versus stratification comes in. Multivariable adjustment is a statistical method that attempts to isolate the effect of our exposure (oral contraceptives) on the outcome (cardiovascular risk). We often adjust for factors related to both the exposure and the outcome, because we don’t want a relationship such as age (younger women are more likely to be on oral contraceptives and are at lower risk of cardiovascular events than older women) to secretly explain a statistical association. Specifically, Hannaford and Owen-Smith note that “in effect, these adjustments level the epidemiological playing field so that the real effects of combined oral contraceptives can be determined, but at a cost of losing information about the effects of the adjusting factor (in this case smoking) among contraceptive users.” There are many ways to control for confounding: randomization, restriction, matching, stratification, and, of course, adjustment. But more often than not in epidemiology, we use adjustment because it answers the question we are asking: what is the effect of the exposure itself?

The clinical mind reaches for subgroup analysis as the most efficient way to answer the question “What about my patient?” (“What about men? Women? Patients with comorbidities?”) without having to work it out from a beta coefficient (assuming the authors even provided one). In other words, instead of smoothing out the data over all possible groups (smokers, those with diabetes, etc.), we want to plot individual points on the graph.
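To make the contrast concrete, here is a minimal sketch in Python. The counts are invented purely for illustration and do not come from Hannaford and Owen-Smith or any other study, and the function names are my own. I use a Mantel-Haenszel pooled odds ratio as a simple stand-in for adjustment (a published analysis would more likely use a regression model), and plain stratum-specific odds ratios for the stratified view.

```python
# Hypothetical counts, invented for illustration only (not from any study).
# Each stratum is a 2x2 table:
# (exposed cases, exposed non-cases, unexposed cases, unexposed non-cases)
strata = {
    "non-smokers": (10, 990, 20, 1980),
    "smokers": (40, 460, 20, 480),
}


def odds_ratio(a, b, c, d):
    """Odds ratio for a single 2x2 table."""
    return (a * d) / (b * c)


def mantel_haenszel_or(tables):
    """Pooled odds ratio across strata (Mantel-Haenszel estimator)."""
    tables = list(tables)
    numerator = sum(a * d / (a + b + c + d) for a, b, c, d in tables)
    denominator = sum(b * c / (a + b + c + d) for a, b, c, d in tables)
    return numerator / denominator


# Crude: collapse the strata into one table and ignore smoking entirely.
crude = odds_ratio(*[sum(column) for column in zip(*strata.values())])

# Stratified: one estimate per subgroup, the "what about my patient?" view.
stratum_ors = {name: odds_ratio(*table) for name, table in strata.items()}

# Adjusted: a single summary estimate with smoking held constant.
adjusted = mantel_haenszel_or(strata.values())

print(f"crude OR:    {crude:.2f}")
for name, value in stratum_ors.items():
    print(f"{name} OR: {value:.2f}")
print(f"adjusted OR: {adjusted:.2f}")
```

With these made-up numbers, the single adjusted estimate lands between the two stratum-specific estimates. That one number answers the epidemiologist's question, but the clinician sitting across from a smoker or a non-smoker only finds their patient in the stratified results.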

Hannaford and Owen-Smith didn’t find many epidemiologic studies that answered their very specific clinical question in 1998; hopefully the odds would be higher 20 years later. But compromising either epidemiologic or clinical methods isn’t the way to meet in the middle. So, what can we do? Epidemiology gives the clinician methods to think systematically about patterns and causes of disease. Many of my colleagues are physicians seeking additional research training. They compare anesthesia protocols for outcomes after colon cancer surgeries, while my academic colleagues look at cumulative environmental exposures and lifetime risk of colon cancer. The overarching topics are similar, but the questions and resulting methods are incredibly different.

How do we make population studies more relevant to clinicians? There are many ways, and I’d love to hear your thoughts, but some to get us started include: building interdisciplinary teams of epidemiologists and clinicians when designing studies and analyses; using different but valid methods such as stratification with or instead of adjustment (and powering our studies for subgroup analysis); and…what else?

Bailey DeBarmore is a cardiovascular epidemiology PhD student at the University of North Carolina at Chapel Hill. Her research focuses on diabetes, stroke, and heart failure. She tweets @BaileyDeBarmore and blogs at baileydebarmore.com. Find her on LinkedIn and Facebook.